Researchers at several institutions, including the Max Planck Institute for Informatics and the Saarbrücken Research Center for Visual Computing, Interaction and Artificial Intelligence (VIA), have developed an AI-supported image editing method called DragGAN. It gives users flexible control over facial expressions, poses, perspective, and other properties of a photo through drag and drop. Unlike conventional photo editing programs, DragGAN only requires the user to mark start and end points on the image; the AI then follows the marked points and generates an image that matches the requested change. The researchers will present the method at the SIGGRAPH 2023 computer graphics conference in August.
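To make the start-point and end-point interaction concrete, here is a minimal Python sketch of how such a drag could be represented and broken into small steps. It is not DragGAN's code: the names `DragPair`, `step_toward`, and `drag_path` and the fixed step size are assumptions made for illustration, and the sketch only tracks point positions. The real method also regenerates the image at each step, which is not modeled here.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) in pixel coordinates


@dataclass
class DragPair:
    """One user-marked start point and the end point it should reach (hypothetical type)."""
    start: Point
    end: Point


def step_toward(current: Point, end: Point, step: float) -> Point:
    """Move `current` at most `step` pixels along the straight line to `end`."""
    dx, dy = end[0] - current[0], end[1] - current[1]
    dist = math.hypot(dx, dy)
    if dist <= step:
        # Close enough: land exactly on the end point.
        return end
    return (current[0] + step * dx / dist, current[1] + step * dy / dist)


def drag_path(pair: DragPair, step: float = 2.0) -> List[Point]:
    """Return every intermediate position from start to end, inclusive."""
    if step <= 0:
        raise ValueError("step must be positive")
    path = [pair.start]
    while path[-1] != pair.end:
        path.append(step_toward(path[-1], pair.end, step))
    return path


if __name__ == "__main__":
    # Drag a point 10 pixels to the right in steps of at most 4 pixels.
    for position in drag_path(DragPair(start=(0.0, 0.0), end=(10.0, 0.0)), step=4.0):
        print(position)
```

Splitting the drag into small steps mirrors the idea that the image changes gradually while the marked point travels toward its destination, rather than jumping there in one move.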

DragGAN is built on PyTorch, a Python library for machine learning; no hardware requirements have been announced yet. According to lead author Xingang Pan, the source code is scheduled for release in June. Although the method is still at an early stage, it is efficient enough that users wait only a few seconds after an edit before they can continue. DragGAN is designed to produce realistic results even in difficult scenarios, but it may also hallucinate content that was not in the original image.

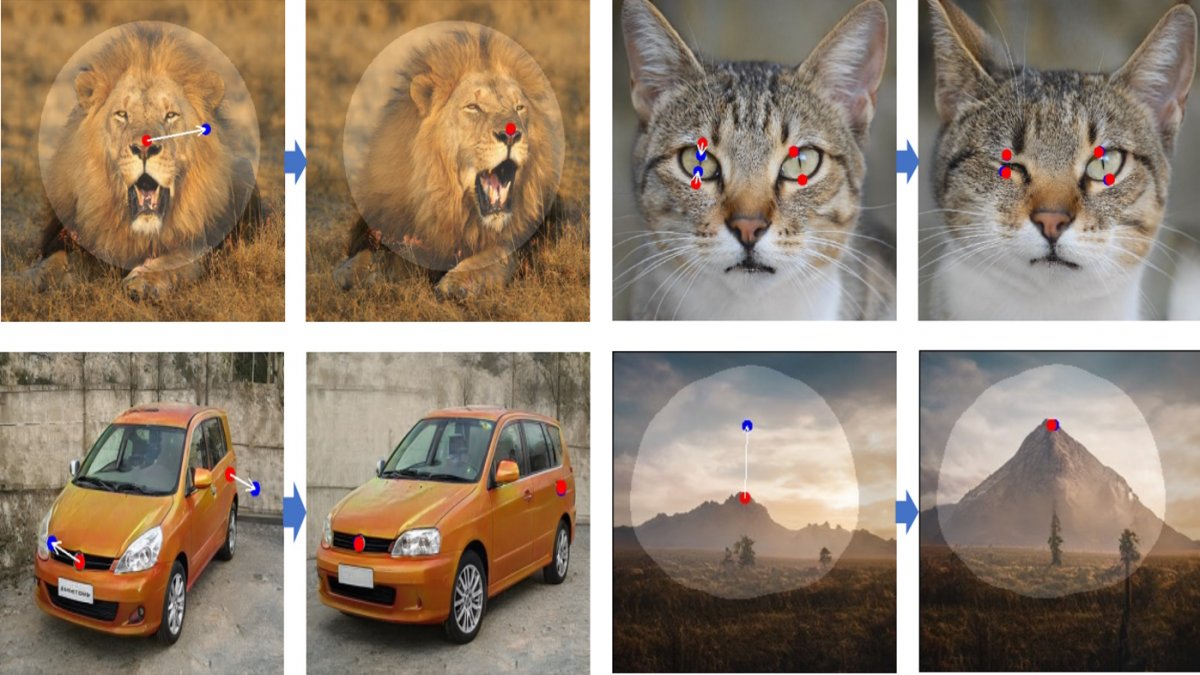

The researchers have not yet disclosed how well DragGAN performs, but example videos are available on the website of the Max Planck Institute for Informatics. In them, users adjust facial expressions, poses, clothing length, and perspective by drag and drop. A mask can also be used to define the region that the AI is allowed to manipulate. In one example, a dog's head is turned without the perspective of the entire image changing. DragGAN gives an early look at what photo editing may become.
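The masking idea mentioned above can be illustrated with a small Python sketch. The article does not describe how DragGAN applies masks internally, so this is only an assumption-laden illustration of the general concept: pixels inside the mask may take their edited value, while pixels outside keep the original. The function name `apply_masked_edit` and the use of plain nested lists as images are hypothetical choices made to keep the example self-contained.

```python
from typing import List

Image = List[List[int]]   # grayscale pixel values, row by row
Mask = List[List[bool]]   # True where editing is allowed


def apply_masked_edit(original: Image, edited: Image, mask: Mask) -> Image:
    """Take `edited` pixels where the mask is True and `original` pixels elsewhere."""
    if not (len(original) == len(edited) == len(mask)):
        raise ValueError("original, edited, and mask must have the same height")
    result = []
    for orig_row, edit_row, mask_row in zip(original, edited, mask):
        if not (len(orig_row) == len(edit_row) == len(mask_row)):
            raise ValueError("original, edited, and mask must have the same width")
        result.append([e if m else o for o, e, m in zip(orig_row, edit_row, mask_row)])
    return result


if __name__ == "__main__":
    original = [[10, 10, 10],
                [10, 10, 10]]
    edited = [[99, 99, 99],
              [99, 99, 99]]
    # Only the left column (for instance, the dog's head) may change.
    mask = [[True, False, False],
            [True, False, False]]
    print(apply_masked_edit(original, edited, mask))
```

In the dog example, the mask would cover the head, so the rest of the picture stays fixed while the head turns.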