Google is continuing to expand its cloud capabilities with the introduction of new data centres. The company has said that its new systems could deliver up to 26 exaflops of AI performance, equivalent to 26 quintillion (26 × 10^18) operations per second. This performance will be available to customers via future A3 instances. Google has also announced that it is building A3 GPU supercomputers across the globe, each built from Nvidia’s H100 GPUs and Intel’s fourth-generation Xeon Scalable processors.

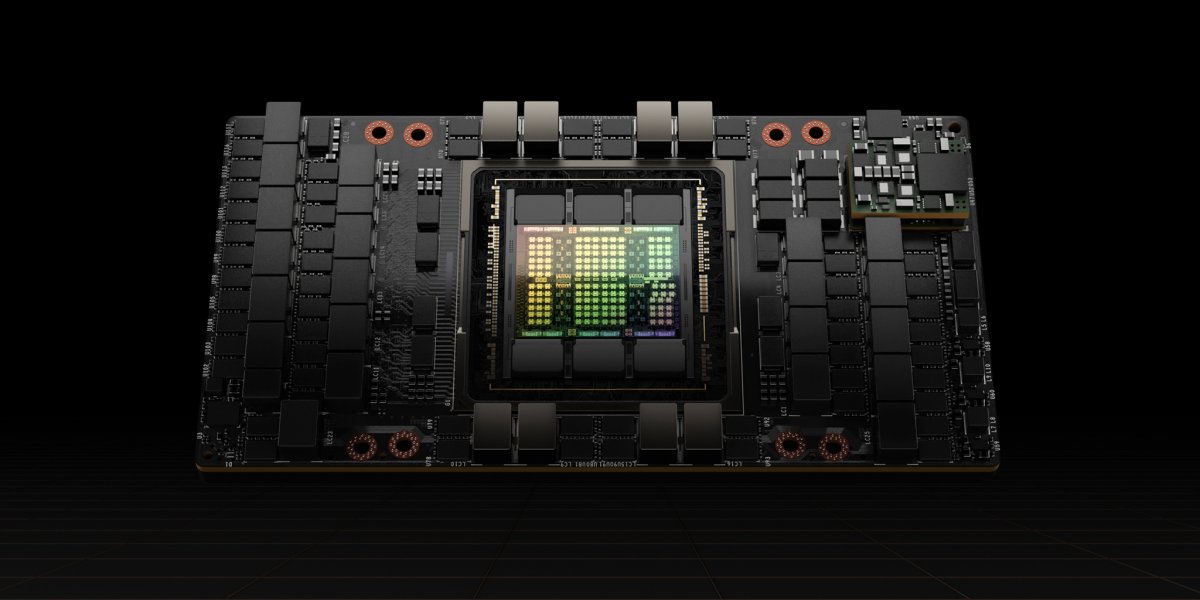

The new systems are based on Nvidia’s DGX H100, and each node will consist of eight H100 accelerators and two Xeon SP CPUs. Nvidia’s NVLink handles communication between the GPUs, with Google using its own software stack. Meanwhile, the company’s custom network processors, which are being developed together with Intel, are meant to take load off the Xeon CPUs. Tens of thousands of H100 GPUs will be deployed in the larger data centres, and A3 supercomputers with up to 26,000 GPUs in a single cluster will be built for the largest customers.
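To put those figures in proportion, the short Python sketch below works out how many eight-GPU nodes and Xeon CPUs a full 26,000-GPU cluster would need, and what the 26-exaflop AI figure implies per GPU. It assumes the 26 exaflops refer to a single full-size cluster, which Google's announcement suggests but the figures above do not state outright; the function names are illustrative only.

```python
# Figures from the article: 26,000 GPUs per cluster, nodes of eight GPUs
# and two CPUs, and up to 26 exaflops of AI performance.
GPUS_PER_CLUSTER = 26_000
GPUS_PER_NODE = 8
CPUS_PER_NODE = 2
AI_EXAFLOPS = 26  # assumption: this figure describes one full cluster


def cluster_sizing(gpus=GPUS_PER_CLUSTER):
    """Return (nodes, cpus) for a cluster with the given GPU count."""
    nodes = gpus // GPUS_PER_NODE
    cpus = nodes * CPUS_PER_NODE
    return nodes, cpus


def ai_petaflops_per_gpu(exaflops=AI_EXAFLOPS, gpus=GPUS_PER_CLUSTER):
    """Implied AI throughput per GPU in petaflops (1 exaflop = 1,000 petaflops)."""
    return exaflops * 1_000 / gpus


nodes, cpus = cluster_sizing()
print(f"{nodes} nodes, {cpus} CPUs, {ai_petaflops_per_gpu()} PFLOPS of AI performance per GPU")
```

Under that assumption, a full cluster comes to 3,250 nodes and 6,500 CPUs, with roughly one petaflop of AI performance per GPU. That figure is for low-precision AI workloads and is not comparable to FP64 numbers.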

However, the race for the fastest supercomputer is still led by others: Frontier tops the current Top500 list with thousands of AMD Epyc processors and Instinct MI250X GPUs. At roughly 30 teraflops each, 26,000 H100 GPUs would reach a theoretical peak of approximately 780 petaflops in FP64 format, but in reality, the sustained performance would likely be lower because of the communication overhead in such a large network. Even so, a fully equipped A3 supercomputer would comfortably occupy second place in the Top500 list.
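The FP64 estimate is simple multiplication, sketched here in Python. The per-GPU value of 30 teraflops is the figure implied by the article's own numbers (780 petaflops across 26,000 GPUs), not a quoted specification, and the efficiency factor is a purely hypothetical placeholder for the scaling losses mentioned above.

```python
def peak_fp64_petaflops(gpus, tflops_per_gpu):
    """Theoretical peak in petaflops (1 petaflop = 1,000 teraflops)."""
    return gpus * tflops_per_gpu / 1_000


def sustained_petaflops(peak_pflops, efficiency):
    """Scale a theoretical peak by an assumed efficiency between 0 and 1."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    return peak_pflops * efficiency


# 26,000 GPUs at an assumed 30 TFLOPS of FP64 each
peak = peak_fp64_petaflops(26_000, 30)
print(f"Theoretical FP64 peak: {peak} PFLOPS")

# Hypothetical efficiency of 70%, chosen only to illustrate the effect
print(f"At 70% efficiency: {sustained_petaflops(peak, 0.7):.0f} PFLOPS")
```

The real efficiency of such a cluster in a Linpack run is unknown, so the second figure should be read as an illustration of how quickly the headline number shrinks, not as a prediction.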

As a commercial company, Google is unlikely to carry out the Linpack benchmark run needed to be officially recognised in the list. These new data centres and supercomputers demonstrate Google’s ongoing commitment to cloud expansion and development in order to remain a leading player in the industry.