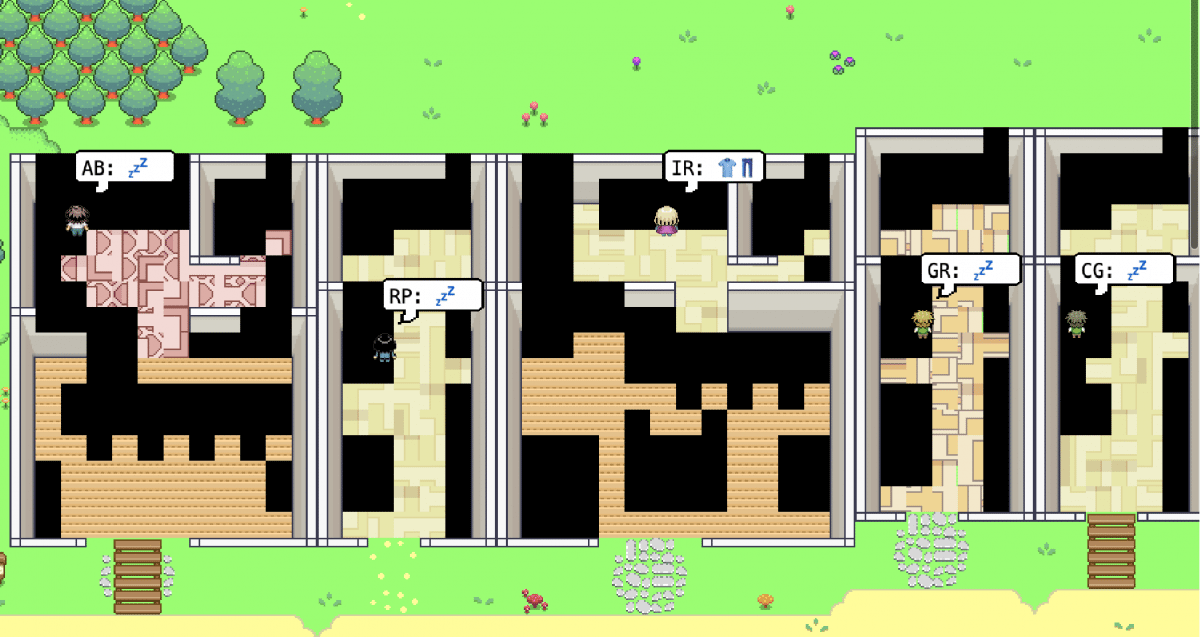

Researchers from Google and Stanford University have developed a simulation in which a language model controls the communication and behavior of simple autonomous software agents. Using ChatGPT, they created 25 software agents that live in a virtual community called “Smallville”. According to the researchers, these agents exhibited “realistic human behavior” and “emergent social interactions”. For instance, one agent's plan to host a Valentine's Day party led the simulated character to send invitations to other agents and to decorate the room on its own.

The output of the language model that controls one agent serves as input for the other agents, much like in a text-based role-playing game. Because the language model's “context length” is limited to 2,048 tokens, it cannot hold an agent's entire history at once. To make each agent act consistently, the researchers therefore linked the model to a database containing that agent's “memory stream”. This stream records the agent's “observations”, including summaries of events and interactions, as well as its plans and intentions. The researchers also used ChatGPT to generate both the abstract summaries and the plans.
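The memory-stream idea can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the researchers' implementation: the record kinds, the `broadcast` helper and the “most recent records first” selection are hypothetical, and tokens are approximated by counting words, whereas a real tokenizer counts differently. It shows two points from the paragraph above: one agent's output becomes an observation for the others, and only the part of a memory stream that fits into the 2,048-token budget can be handed to the model.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Budget mirroring the 2,048-token context length mentioned in the text.
CONTEXT_BUDGET = 2048


@dataclass
class MemoryRecord:
    timestep: int
    kind: str  # hypothetical kinds: "observation", "summary" or "plan"
    text: str


@dataclass
class MemoryStream:
    records: List[MemoryRecord] = field(default_factory=list)

    def add(self, timestep: int, kind: str, text: str) -> None:
        self.records.append(MemoryRecord(timestep, kind, text))

    def build_context(self, budget: int = CONTEXT_BUDGET) -> List[MemoryRecord]:
        """Select the newest records that fit the budget, returned oldest first.

        Assumption: one whitespace-separated word counts as one token.
        """
        selected: List[MemoryRecord] = []
        used = 0
        for rec in sorted(self.records, key=lambda r: r.timestep, reverse=True):
            cost = len(rec.text.split())
            if used + cost > budget:
                break  # stop at the first record that no longer fits
            selected.append(rec)
            used += cost
        selected.reverse()  # restore chronological order for the prompt
        return selected


def broadcast(streams: Dict[str, MemoryStream], speaker: str,
              timestep: int, utterance: str) -> None:
    """Store one agent's model output as an observation for every other agent."""
    for name, stream in streams.items():
        if name != speaker:
            stream.add(timestep, "observation", f"{speaker} said: {utterance}")
```

For example, `broadcast(streams, "alice", 1, "Come to my party")` with the hypothetical agents `alice` and `bob` adds the line `alice said: Come to my party` to Bob's stream only. The recency-only selection is a deliberate simplification to keep the sketch short.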

The authors of the paper do not ascribe intelligence to the software agents, although similar autonomous systems are currently attracting considerable attention. The system is also too slow and computationally expensive for use in computer games. Instead, the researchers suggest using it to design online communities, for example to test the effects of particular rules and behaviors. However, the fundamental problem shared by all applications of large language models remains: the authors do not know whether the system is stable.

The simulation produced three “emergent” behaviors that arose solely from interactions between agents: information diffusion, in which agents passed information on to one another when necessary; the coordination of actions among multiple agents; and the formation of new relationships between agents. The researchers were able to verify these behaviors directly while the simulation was running.
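To illustrate how information diffusion can emerge from pairwise interactions alone, here is a toy simulation. It assumes that at each step one random pair of agents meets and that news is always passed on when either of them knows it. No language model is involved and this is not the researchers' setup; the function name `simulate_diffusion` is hypothetical, and the agent count of 25 in the usage note only mirrors the size of Smallville.

```python
import random
from typing import List, Set


def simulate_diffusion(num_agents: int, steps: int, seed: int = 0) -> List[int]:
    """Return how many agents know the news after each step (toy model)."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    informed: Set[int] = {0}   # agent 0 starts with the news, e.g. a party invitation
    history = [len(informed)]
    for _ in range(steps):
        # One random pair of distinct agents meets and talks.
        a, b = rng.sample(range(num_agents), 2)
        # If either agent knows the news, both know it afterwards.
        if a in informed or b in informed:
            informed.update((a, b))
        history.append(len(informed))
    return history
```

Calling `simulate_diffusion(25, 200, seed=1)` yields a non-decreasing curve that starts at one informed agent. Nobody is told to spread the news globally; the spread follows only from local conversations, which is the sense in which the article calls the behavior emergent.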

In conclusion, the system works well for supplementing texts and creating fictional scenarios. However, there is a risk that the language model will hallucinate or drift off course linguistically, so the stability of the system remains an open issue.