Apple is set to extend the controversial nude image scanner from iMessage to more components of its operating systems. The move is part of a wider expansion of Communication Safety in Apple’s upcoming iOS 17, iPadOS 17, and macOS 14. The new features will use AI algorithms to recognize problematic content locally on the device, with Communication Safety extending into system-wide photo pickers and third-party apps. The new operating systems will also scan videos for the first time. Additionally, Communication Safety will cover the local file-sharing service AirDrop and the Contact Posters that appear when someone calls.

The precise nature of the integration into third-party applications has yet to be confirmed. It is also unclear whether the Finder file manager will be subject to the new scanning. Apple has said that Communication Safety, which is aimed at protecting children from viewing or sharing nudity, will be enabled gradually through spring 2023. To use the feature, the child’s Apple ID must be part of the parent’s Family Sharing group, with settings controlled via Screen Time.

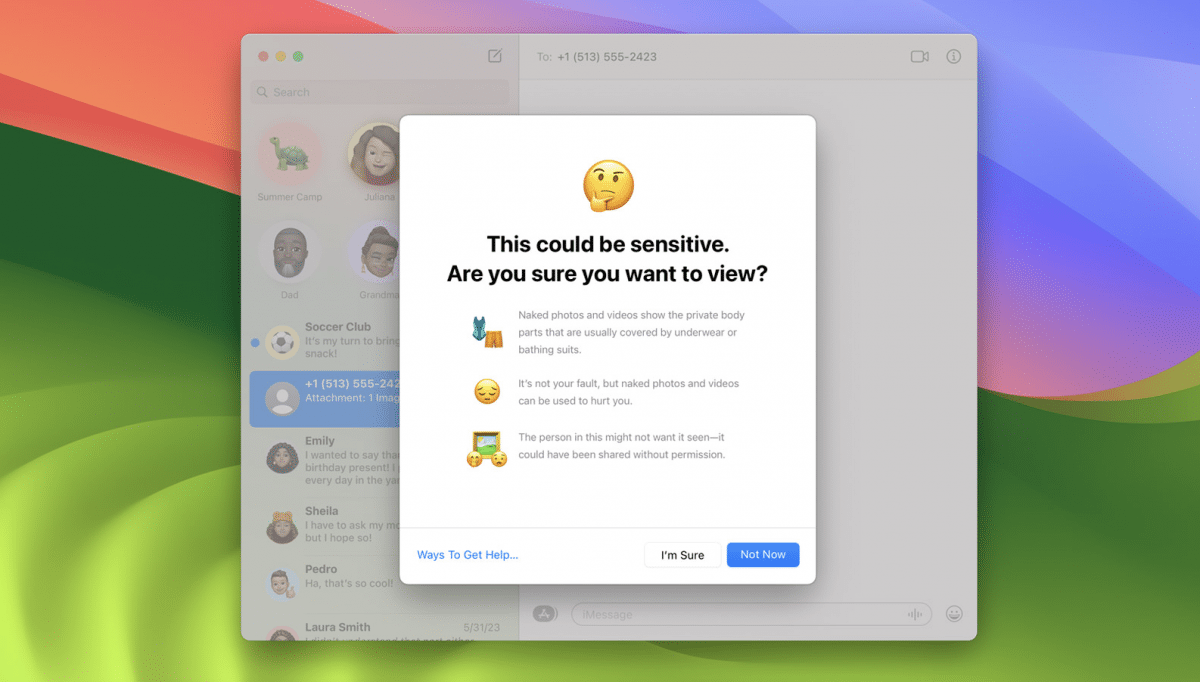

In addition to the image scanner, Apple had announced plans for a scanner for child sexual abuse material (CSAM), which proved controversial. It later dropped those plans in the face of significant opposition. The firm also did not implement an alert for parents when nude images are detected, despite having planned one at an earlier stage. Adults, however, can activate a Sensitive Content Warning to hide problematic content in the new operating systems.